You have a beautiful, modern website. It’s fast, interactive, and provides a fantastic user experience, all thanks to the power of JavaScript. But you have a nagging question in the back of your mind: if all my important content is loaded by scripts, can Google even see it? It’s a critical question, and the answer directly impacts your site’s ability to rank and attract organic traffic. So, let’s tackle it head-on. Can Google index JavaScript-generated content? The short answer is yes. The more accurate answer is yes, but it’s complicated, and there are many ways it can go wrong.

This guide will walk you through everything you need to know about the relationship between JavaScript and Google. We’re going to demystify the process, stripping away the complex jargon to give you a clear understanding of how Googlebot interacts with your site. We will explore the hurdles it faces and, more importantly, provide you with a toolbox of solutions to overcome them. Whether you’re a marketer, a business owner, or a developer, you’ll leave with the confidence to ensure your dynamic content isn’t invisible to the world’s largest search engine.

The Big Question: So, Can Google Really Index It?

Let’s start by putting the main concern to rest. For years, the SEO community operated under the assumption that “if it’s not in the source code, Google can’t see it.” This is no longer true. Google has invested heavily in its ability to process JavaScript.

Google’s official stance, repeated many times by representatives like John Mueller, is that as long as you don’t block the crawler from accessing your JavaScript files, it will generally be able to render your pages and see your content, much like a modern browser does.

However, this “generally” hides a world of complexity. The process isn’t perfect, it isn’t instantaneous, and it’s resource-intensive for Google. This is where the problems begin. Just because Google can index your JavaScript-generated content doesn’t mean it will do so quickly, correctly, or efficiently. Understanding the process is the first step to troubleshooting and optimizing it.

Behind the Curtain: Google’s Two-Wave Indexing Process

The root of all JavaScript SEO challenges lies in how Google processes modern websites. It doesn’t happen all at once. Instead, Google uses a two-wave (or two-phase) system.

Wave 1: The Initial Crawl

- Fetch HTML: Googlebot discovers your URL and requests the page from your server. It downloads the initial HTML file it receives. For a traditional, static website, this HTML contains all the text, links, and content.

- Discover Links: The crawler scans this raw HTML for `<a>` tags with `href` attributes and adds any new URLs it finds to its queue to be crawled later.

- Initial Indexing: Google indexes the content it can see in this initial HTML. If your site is heavily reliant on JavaScript, this initial file might be almost empty, containing little more than a link to a large JavaScript file and a single `<div id="app"></div>` element.

At the end of wave one, Google might only see a blank page. It knows it needs to do more work, so it adds your page to another, much longer queue.
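To make wave one concrete, here is a minimal TypeScript sketch of what a crawler can extract from the raw HTML of a client-side rendered page before any JavaScript runs, compared with the same page after rendering. The markup, the `/static/bundle.js` path, and the `extractLinks` and `extractText` helpers are illustrative assumptions. The regular expressions are a simplification; a real crawler uses a full HTML parser.

```typescript
// What a client-side rendered app might return before JavaScript runs (hypothetical).
const appShell = `<!doctype html>
<html>
  <head><title>Shop</title></head>
  <body>
    <div id="app"></div>
    <script src="/static/bundle.js"></script>
  </body>
</html>`;

// The same page after JavaScript has injected its content (hypothetical).
const renderedPage = `<!doctype html>
<html>
  <head><title>Shop</title></head>
  <body>
    <div id="app">
      <h1>Blue Running Shoes</h1>
      <p>Lightweight shoes for daily training.</p>
      <a href="/products/red-running-shoes">Red Running Shoes</a>
    </div>
    <script src="/static/bundle.js"></script>
  </body>
</html>`;

// Collect href values from <a> tags (regex used for illustration only).
function extractLinks(html: string): string[] {
  const links: string[] = [];
  for (const match of html.matchAll(/<a\s[^>]*href="([^"]+)"/gi)) {
    if (match[1]) links.push(match[1]);
  }
  return links;
}

// Approximate visible body text: drop <script> blocks and tags, collapse whitespace.
function extractText(html: string): string {
  const body = html.match(/<body[^>]*>([\s\S]*)<\/body>/i)?.[1] ?? "";
  return body
    .replace(/<script[\s\S]*?<\/script>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

console.log(extractLinks(appShell));     // empty: nothing to crawl yet
console.log(extractText(appShell));      // empty string: no visible text
console.log(extractLinks(renderedPage)); // the product link only appears after rendering
```

In this sketch the shell yields no links and no text, which is roughly the position Google is in at the end of wave one for a purely client-side rendered page.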

Wave 2: The Rendering Phase

- Queuing: Your page waits in a render queue. The keyword here is waits. Google has to render the entire web, and it has finite resources. Google’s documentation says a page may stay in this queue for just a few seconds, but it can take longer, and in some cases the wait stretches to hours, days, or even weeks.

- Rendering: When its turn comes, your page is loaded in the Web Rendering Service (WRS). Think of the WRS as a massive fleet of headless Chrome browsers. It executes your JavaScript, makes API calls, and builds the page just like a user’s browser would.

- Second-Pass Indexing: Once the page is fully rendered, Google can finally see the complete JavaScript-generated content. It crawls this rendered HTML to find the actual text, links, images, and structure of your page. This new content is then added to the index.

The existence of this two-wave system and the delay it introduces is the single most important concept to grasp in JavaScript SEO. It’s the source of nearly every problem we’re about to discuss.

Challenge 1: The Render Queue Delay

The most obvious problem with the two-wave system is the delay. Your content is effectively invisible to Google until it completes the second wave of rendering and indexing.

This is a massive issue for time-sensitive content.

- News Articles: If you publish a breaking news story, you need it indexed in minutes, not days. A rendering delay means you miss the entire conversation.

- E-commerce Sales: Launching a Black Friday sale? If the product details and prices are JavaScript-generated content, they might not be indexed until the sale is already over.

- Job Postings or Event Listings: These have a short shelf life. A delay in indexing means a delay in attracting relevant search traffic.

Even for evergreen content, this delay can slow down your SEO momentum. You publish a new blog post, but it takes a week or more to show up in search results, delaying your ability to gather backlinks and build authority.

Challenge 2: Failed or Incomplete Rendering

What happens if the rendering process in wave two doesn’t work correctly? This is a common and serious problem. If the WRS can’t execute your JavaScript properly, Google may never see your full content.

Here are some common causes of rendering failure:

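- JavaScript Errors: A bug in your code can halt execution. An error that never shows up in your own browser can still break Google’s WRS, for example because the WRS doesn’t keep cookies or local storage between page loads. When that happens, your page may be left half-rendered or completely blank.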

- Resource Blocking: If your robots.txt file accidentally blocks Googlebot from accessing critical `.js` or CSS files, it won’t have the resources it needs to build the page correctly.

- Network Timeouts: If your JavaScript needs to fetch data from an external API to build the page, and that API is slow or unresponsive, the WRS might time out and give up.

- Unsupported Features: Google’s WRS uses a very recent version of Chrome, so it supports most modern web features. However, it doesn’t support features that require user permissions (like push notifications or webcam access), and this can sometimes cause unexpected errors.
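A quick way to catch the resource-blocking problem is to test your script and stylesheet paths against your robots.txt rules. The TypeScript sketch below is a deliberately simplified matcher: it reads only the group for the given user agent (falling back to `*`), treats rules as plain path prefixes, ignores the `*` and `$` wildcards, and lets the longest matching rule win, with `Allow` winning ties, which is how Google describes its own precedence. The sample rules and asset paths are hypothetical; for a real audit, rely on Search Console or a complete robots.txt parser.

```typescript
type Rule = { allow: boolean; path: string };

// Parse robots.txt into rule lists keyed by lowercase user-agent token.
function parseRobots(txt: string): Map<string, Rule[]> {
  const groups = new Map<string, Rule[]>();
  let currentAgents: string[] = [];
  let lastWasAgent = false;
  for (const rawLine of txt.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*$/, "").trim(); // strip comments
    const sep = line.indexOf(":");
    if (sep === -1) continue;
    const field = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();
    if (field === "user-agent") {
      // Consecutive User-agent lines share one group of rules.
      if (!lastWasAgent) currentAgents = [];
      const agent = value.toLowerCase();
      currentAgents.push(agent);
      if (!groups.has(agent)) groups.set(agent, []);
      lastWasAgent = true;
      continue;
    }
    lastWasAgent = false;
    // An empty Allow/Disallow value matches nothing, so it is skipped.
    if ((field === "allow" || field === "disallow") && value !== "") {
      for (const agent of currentAgents) {
        groups.get(agent)?.push({ allow: field === "allow", path: value });
      }
    }
  }
  return groups;
}

// True if the path may be crawled by the given user agent (simplified prefix matching).
function isAllowed(txt: string, userAgent: string, path: string): boolean {
  const groups = parseRobots(txt);
  const rules = groups.get(userAgent.toLowerCase()) ?? groups.get("*") ?? [];
  let best: Rule | undefined;
  for (const rule of rules) {
    if (!path.startsWith(rule.path)) continue;
    const longer = best === undefined || rule.path.length > best.path.length;
    const tieGoesToAllow = best !== undefined && rule.path.length === best.path.length && rule.allow;
    if (longer || tieGoesToAllow) best = rule;
  }
  return best === undefined ? true : best.allow;
}

// Hypothetical rules that accidentally block JavaScript bundles.
const robotsTxt = `
User-agent: *
Disallow: /assets/
Allow: /assets/css/
`;

for (const asset of ["/assets/js/app.js", "/assets/css/site.css", "/products/shoes"]) {
  console.log(asset, isAllowed(robotsTxt, "Googlebot", asset) ? "allowed" : "BLOCKED");
}
```

With these sample rules, the stylesheet stays crawlable but the JavaScript bundle is blocked, exactly the kind of silent mistake that leaves the WRS unable to build the page.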

When rendering fails, Google is left with the content it saw in wave one, which might be nothing at all. From an SEO perspective, your page is a blank slate.
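One way to reduce the damage from slow APIs and failed requests is to cap how long a data call may take and render fallback content instead of leaving the page blank. This TypeScript sketch assumes a hypothetical `loadReviews` call standing in for a real API request; the 3-second default budget is an arbitrary illustration, since Google does not publish a fixed WRS timeout. It limits the harm from one failing widget, but it does not replace getting important content into the initial HTML.

```typescript
// Resolve with the promise's value, or with `fallback` if it rejects or exceeds `ms`.
function withTimeout<T>(promise: Promise<T>, ms: number, fallback: T): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<T>((resolve) => {
    timer = setTimeout(() => resolve(fallback), ms);
  });
  const safe = promise.catch(() => fallback); // a failed request also falls back
  return Promise.race([safe, timeout]).finally(() => clearTimeout(timer));
}

// Hypothetical slow API call, simulated with a delay.
function loadReviews(delayMs: number): Promise<string[]> {
  return new Promise((resolve) =>
    setTimeout(() => resolve(["Great fit", "Very light"]), delayMs),
  );
}

// Render the reviews if they arrive in time, otherwise a short placeholder message.
async function renderReviews(delayMs: number, budgetMs = 3000): Promise<string> {
  const reviews = await withTimeout<string[]>(loadReviews(delayMs), budgetMs, []);
  return reviews.length > 0
    ? `<ul>${reviews.map((r) => `<li>${r}</li>`).join("")}</ul>`
    : "<p>Reviews are temporarily unavailable.</p>";
}

renderReviews(100).then((html) => console.log(html));
```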

Challenge 3: Negative Impact on Performance and Core Web Vitals

User experience is a critical part of modern SEO, and page speed is a huge component of that. JavaScript-generated content often comes at a high performance cost. Large JavaScript files take time to download and even more time for the browser (and Google’s renderer) to parse and execute.

This directly affects Google’s Core Web Vitals, which are direct ranking signals:

- Largest Contentful Paint (LCP): Because the main content can’t be displayed until the JavaScript is executed, this metric is often poor on client-side rendered sites. Users are left staring at a blank screen or a loading spinner.

- Interaction to Next Paint (INP): If the browser’s main thread is busy executing a massive JavaScript file, it can’t respond to user inputs like clicks or taps. This leads to a laggy, frustrating experience.

- Cumulative Layout Shift (CLS): If JavaScript loads content or ads into the page without reserving space for them first, it can cause the existing content to jump around, leading to a high CLS score.
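For INP in particular, a common mitigation is to break long JavaScript work into smaller batches and yield to the main thread between them, so the browser gets a chance to handle clicks and taps. The TypeScript sketch below yields with `setTimeout(0)`, a widely supported technique; the `processInChunks` helper and the batch size of 50 are illustrative assumptions rather than recommended values.

```typescript
// Hand control back to the event loop so other queued tasks (such as input handlers) can run.
function yieldToMain(): Promise<void> {
  return new Promise((resolve) => setTimeout(resolve, 0));
}

// Process a large array in small batches instead of one long, blocking loop.
async function processInChunks<T, R>(
  items: T[],
  work: (item: T) => R,
  chunkSize = 50,
): Promise<R[]> {
  const results: R[] = [];
  for (let i = 0; i < items.length; i += chunkSize) {
    for (const item of items.slice(i, i + chunkSize)) {
      results.push(work(item));
    }
    await yieldToMain(); // pause between batches
  }
  return results;
}

// Example: build 1,000 list items without monopolizing the main thread.
const productIds = Array.from({ length: 1000 }, (_, i) => i + 1);
processInChunks(productIds, (id) => `<li>Product ${id}</li>`).then((rows) => {
  console.log(rows.length);
});
```

The total work is the same; what changes is that no single task runs long enough to block input for the whole duration.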

A site that is slow and frustrating for users is also slow and computationally expensive for Google to render. This can lead to a lower crawl budget and negative ranking signals.

Challenge 4: Crawling and Discovering Links

Search engines build a map of the internet by following links. If your website’s navigation and internal links are part of your JavaScript-generated content, they are only discoverable during that second wave of indexing.

This creates two problems:

- Delayed Discovery: Important pages on your site might not be discovered for weeks because the links pointing to them are hidden until rendering is complete.

- Incorrect Link Formats: For a link to be reliably followed by Google, it must be an HTML `<a>` tag with a resolvable `href` attribute. Developers sometimes create “links” using other HTML elements like `<span>` or `<div>` with an `onclick` JavaScript event. While these work for users, Googlebot is likely to ignore them, breaking your internal linking structure and trapping “link equity.”
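The difference is easiest to see side by side. This TypeScript sketch builds navigation markup from a hypothetical list of pages twice: once as real `<a href>` links a crawler can follow, and once using the `onclick` pattern Googlebot is likely to ignore. The `NavItem` type and the `navigate()` handler are hypothetical names. Client-side routers can still intercept clicks on real `<a>` elements, so you can keep app-like navigation without giving up crawlability.

```typescript
interface NavItem {
  label: string;
  path: string;
}

// Crawlable: each entry is an <a> element with a resolvable href.
// Labels and paths are assumed to be trusted; real code should HTML-escape them.
function renderCrawlableNav(items: NavItem[]): string {
  return items.map((item) => `<a href="${item.path}">${item.label}</a>`).join("\n");
}

// Not reliably crawlable: no href, navigation only happens through JavaScript.
// navigate() is a hypothetical client-side function.
function renderClickOnlyNav(items: NavItem[]): string {
  return items
    .map((item) => `<span onclick="navigate('${item.path}')">${item.label}</span>`)
    .join("\n");
}

// Count links a crawler could follow: <a> tags with a non-empty href.
function countCrawlableLinks(html: string): number {
  return Array.from(html.matchAll(/<a\s[^>]*href="([^"]+)"/gi)).length;
}

const nav: NavItem[] = [
  { label: "Shoes", path: "/shoes" },
  { label: "Jackets", path: "/jackets" },
];
console.log(countCrawlableLinks(renderCrawlableNav(nav))); // both links are discoverable
console.log(countCrawlableLinks(renderClickOnlyNav(nav))); // none are discoverable
```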

If Google can’t easily find all the pages on your site, it can’t index them. This is especially true for deep pages in a large e-commerce site or an extensive blog archive.

The Solution: How to Ensure Google Indexes Your JavaScript Content

Now that we understand the problems, let’s focus on the solutions. The goal is to make it as easy and fast as possible for Google to see your full content. The best way to do this is to remove the dependency on client-side rendering.

Solution 1: Server-Side Rendering (SSR) – The Gold Standard

Server-side rendering is the most robust solution to JavaScript SEO problems. With SSR, your server runs the JavaScript and builds the full HTML of a page before it sends it to the browser or Googlebot.

How SSR Works:

- A request comes in for a URL.

- The server executes the necessary JavaScript framework code (e.g., React, Vue).

- It generates the complete HTML for the requested page, with all the content in place.

- This fully formed HTML is sent as the response.
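The four steps above can be sketched with Node’s built-in `http` module. This is a minimal illustration of the idea, not how Next.js or Nuxt implement SSR: a plain template function stands in for the framework, and the in-memory `products` data, the `/products/<slug>` URL scheme, and the `renderProductPage` and `startServer` names are all hypothetical. The point is that the response body already contains the product text and internal links, so none of it depends on client-side JavaScript.

```typescript
import * as http from "node:http";

interface Product {
  slug: string;
  name: string;
  description: string;
}

// Hypothetical data source; a real server would query a database or an API.
const products: Product[] = [
  { slug: "blue-running-shoes", name: "Blue Running Shoes", description: "Lightweight shoes for daily training." },
  { slug: "red-running-shoes", name: "Red Running Shoes", description: "Cushioned shoes for long runs." },
];

// Steps 2 and 3: run the rendering code and produce the complete HTML, links included.
// Values are assumed to be trusted; real code should HTML-escape them.
function renderProductPage(product: Product, all: Product[]): string {
  const related = all
    .filter((p) => p.slug !== product.slug)
    .map((p) => `<li><a href="/products/${p.slug}">${p.name}</a></li>`)
    .join("");
  return `<!doctype html>
<html>
  <head><title>${product.name}</title></head>
  <body>
    <h1>${product.name}</h1>
    <p>${product.description}</p>
    <ul>${related}</ul>
  </body>
</html>`;
}

// Steps 1 and 4: receive the request, then send the finished HTML as the response.
function startServer(port: number): http.Server {
  const server = http.createServer((req, res) => {
    const pathname = new URL(req.url ?? "/", "http://localhost").pathname;
    const slug = pathname.startsWith("/products/") ? pathname.slice("/products/".length) : "";
    const product = products.find((p) => p.slug === slug);
    if (!product) {
      res.writeHead(404, { "Content-Type": "text/plain; charset=utf-8" });
      res.end("Not found");
      return;
    }
    res.writeHead(200, { "Content-Type": "text/html; charset=utf-8" });
    res.end(renderProductPage(product, products));
  });
  server.listen(port);
  return server;
}
```

Calling `startServer(3000)` and requesting `/products/blue-running-shoes` should return HTML that already contains the heading, description, and a link to the other product, which is what Googlebot would see in the first wave.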

Why It’s Great for SEO:

- No Rendering Delay: Google sees all the content in the very first wave of indexing, so nothing important has to wait in the render queue before it can be indexed.

- Faster LCP: The browser receives HTML with visible content immediately, leading to a much better Core Web Vitals score.

- Fully Crawlable: All links and content are present in the initial HTML, making them instantly discoverable.

Frameworks like Next.js (for React) and Nuxt.js (for Vue) are built specifically to make implementing SSR much easier. For any new website project where SEO is a priority, SSR should be the default choice.

Solution 2: Static Site Generation (SSG) – The Performance King

If your content doesn’t change with every user request (e.g., a blog, documentation site, or a marketing landing page), you can use Static Site Generation.

How SSG Works:

At “build time” (when you deploy your site), a tool pre-renders every single page of your website into a static HTML file. Your server then just has to serve these simple, pre-built files.
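The build step described above can be sketched in a few lines of TypeScript: loop over the site’s pages once, at build time, and write one finished HTML file per URL. Real generators such as Eleventy or Gatsby add templating, asset handling, and incremental builds; the `pages` array, the `buildSite` function, and the output folder name are assumptions for illustration.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

interface Page {
  urlPath: string; // e.g. "/blog/hello-world/"
  title: string;
  body: string;
}

// Hypothetical content; a real site would read Markdown files or a CMS at build time.
const pages: Page[] = [
  { urlPath: "/", title: "Home", body: "Welcome to the blog." },
  { urlPath: "/blog/hello-world/", title: "Hello, world", body: "Our first post." },
];

// Produce the complete HTML for one page (values assumed trusted; escape them in real code).
function renderPage(page: Page): string {
  return `<!doctype html>
<html>
  <head><title>${page.title}</title></head>
  <body><h1>${page.title}</h1><p>${page.body}</p></body>
</html>`;
}

// Write each page to <outDir>/<urlPath>/index.html so any static file server can serve it.
function buildSite(outDir: string): string[] {
  const written: string[] = [];
  for (const page of pages) {
    const dir = path.join(outDir, page.urlPath);
    fs.mkdirSync(dir, { recursive: true });
    const file = path.join(dir, "index.html");
    fs.writeFileSync(file, renderPage(page), "utf8");
    written.push(file);
  }
  return written;
}
```

Running `buildSite("dist")` as part of a deploy would leave `dist/index.html` and `dist/blog/hello-world/index.html` on disk; from then on, every request, including Googlebot’s, is answered with a file that already contains all of its content.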

Why It’s Great for SEO:

- Blazing Fast: Serving static HTML is the fastest possible way to deliver a web page. This is fantastic for Core Web Vitals.

- Perfectly Indexable: Like SSR, all content is in the initial HTML. It’s the most SEO-friendly approach possible.

- Highly Secure: With no server-side processes to run per request, the attack surface is much smaller.

Tools like Gatsby, Jekyll, and Eleventy are popular static site generators. Even frameworks like Next.js and Nuxt.js have excellent support for SSG.

Solution 3: Dynamic Rendering – The Workaround

What if you have an existing client-side rendered website and can’t re-architect it for SSR or SSG right away? Dynamic rendering is a potential stop-gap solution.

How Dynamic Rendering Works:

You configure your server to be a “user-agent detector.”

- If the request comes from a real user’s browser, you serve them the normal client-side JavaScript application.

- If the request comes from a known search engine bot (like Googlebot), you route that request through a separate service (like Rendertron or Prerender.io) that renders the page and serves a static HTML version to the bot.
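The routing decision at the heart of dynamic rendering can be sketched as a small TypeScript function. The bot patterns below are a hypothetical, incomplete sample, and `isKnownBot` and `chooseRendering` are illustrative names; a real setup would hand the “prerendered” branch to whichever rendering service you use. Both branches must carry the same content. Only the delivery format may differ.

```typescript
// Hypothetical, incomplete list of crawler user-agent patterns.
const BOT_PATTERNS: RegExp[] = [/googlebot/i, /bingbot/i, /duckduckbot/i];

function isKnownBot(userAgent: string): boolean {
  return BOT_PATTERNS.some((pattern) => pattern.test(userAgent));
}

type RenderChoice = "prerendered" | "client-side";

// Decide which version of the page to serve for a given request.
function chooseRendering(userAgent: string | undefined): RenderChoice {
  return userAgent !== undefined && isKnownBot(userAgent) ? "prerendered" : "client-side";
}

const googlebotUA =
  "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";
console.log(chooseRendering(googlebotUA)); // prerendered
console.log(chooseRendering("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0")); // client-side
```

User-agent strings are easy to spoof, so treat this check as a routing hint only, never as a security boundary.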

Google still supports this method, but its own documentation now describes dynamic rendering as a workaround rather than a long-term solution, and it comes with its own challenges. It adds complexity to your infrastructure, can be difficult to maintain, and runs the risk of showing different content to Google than to users (cloaking), which you must be very careful to avoid. It is best seen as a temporary bridge while you work towards a true SSR or SSG solution.

How to Check if Google Can Index Your JavaScript-Generated Content

You don’t have to guess. Google gives you the tools you need to audit your site.

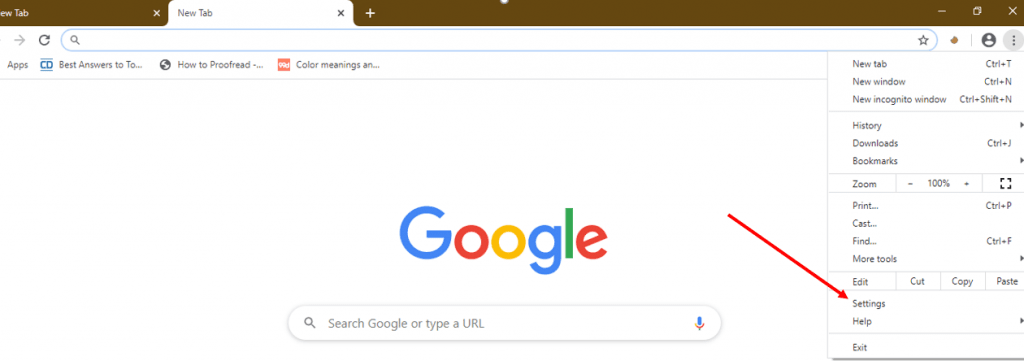

- Google Search Console – URL Inspection Tool: This is your number one tool.

  - Enter a URL from your site and click “Test Live URL.”

  - This will run Google’s renderer on your page in real-time.

  - Once it’s done, click “View Tested Page.”

  - Check the Screenshot: Does it look like your fully rendered page, or is it blank/incomplete?

  - Check the HTML: Look at the “HTML” tab. Search for a unique sentence from your content that is loaded by JavaScript. If you can find it in the tested HTML, Google can render it.

  - Check for Errors: Look at the “More Info” tab for JavaScript console errors or resources that failed to load. These are your clues for debugging.

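- Google’s Rich Results Test: Google retired its Mobile-Friendly Test in late 2023, but the Rich Results Test uses the same rendering engine and offers a quick way to see the rendered HTML and a screenshot of your page, even for URLs you don’t manage in Search Console.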

- Search for a Snippet of Text: Copy a unique sentence from your JavaScript-loaded content and search for it on Google enclosed in quotes (e.g., “This is a unique sentence from my product description”). If your page shows up in the results, congratulations, Google has successfully rendered and indexed that content.
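It is also worth checking the raw HTML your server sends, since that is all Google sees in wave one. This TypeScript sketch assumes Node 18 or later, where `fetch` is available globally; it downloads a page without executing any JavaScript and reports whether a phrase is present. The `checkRawHtml` and `containsPhrase` names and the example URL are placeholders. If a phrase is missing here but present in the URL Inspection Tool’s HTML tab, that content depends on rendering.

```typescript
// Pure comparison, kept separate from the network call so it is easy to test.
// Whitespace and case are normalized; HTML entities (e.g. &amp;) are NOT decoded.
function containsPhrase(html: string, phrase: string): boolean {
  const normalize = (s: string) => s.replace(/\s+/g, " ").toLowerCase();
  return normalize(html).includes(normalize(phrase));
}

// Fetch the server's response as-is; no JavaScript runs, similar to wave one.
async function checkRawHtml(url: string, phrase: string): Promise<boolean> {
  const response = await fetch(url);
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return containsPhrase(await response.text(), phrase);
}

// Example usage (placeholder URL):
// checkRawHtml("https://www.example.com/product", "This is a unique sentence from my product description")
//   .then((found) => console.log(found ? "in raw HTML" : "needs rendering"));
```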

Conclusion: Partnering with Google for a Visible Web

So, can Google index JavaScript-generated content? Absolutely. But the real question is, are you making it easy or hard for Google to do so?

Relying on Google to render your client-side JavaScript is a gamble. It introduces delays, risks of failure, and performance penalties that can hold your site back. The modern approach to technical SEO is to take control of the rendering process yourself.

Your action plan should be:

- Audit: Use the URL Inspection Tool to understand how Google currently sees your most important pages.

- Advocate: Work with your development team. Make them aware of the SEO implications of their architectural choices. For any new project, make the case for Server-Side Rendering or Static Site Generation from day one.

- Optimize: Whether you can change your rendering strategy or not, focus on performance. Optimize, minify, and defer your JavaScript to improve Core Web Vitals. Ensure all your links are in a crawlable format.

- Monitor: Keep an eye on your indexing status in Google Search Console. Regularly test new page templates to ensure they are rendering correctly.

By bridging the gap between development and SEO, you can build incredible, dynamic user experiences that are also fully visible, indexable, and ready to rank at the top of the search results.