This article covers a fresh batch of 2026 platform updates that show where AI and ad ecosystems are heading next. It explores Google’s decision to remove its “What People Suggest” health feature while expanding AI-powered video tools on YouTube; Canva’s new Magic Layers system for breaking flat AI images into editable layers; Meta’s in-stream ad label change from “Sponsored” to “Ad”; Nano Banana Pro’s arrival in Google Ads for AI-generated visuals; Nvidia’s NemoClaw, an enterprise agent distribution built on OpenClaw with security guardrails; and LinkedIn’s refreshed feed algorithm powered by more advanced AI ranking.

Google Removes “What People Suggest” and Leans Into Health AI Video

Google has removed its “What People Suggest” feature, an AI-driven element that summarized crowdsourced health advice, following concerns over misinformation and content reliability in sensitive medical contexts. The feature aggregated and distilled user-generated tips, but in health, where accuracy, evidence, and regulatory expectations are high, relying on loosely vetted crowd input risked surfacing advice that wasn’t clinically sound.

In place of this, Google is emphasizing authoritative health content and expanding AI capabilities within YouTube, particularly around interactive tools for health-related videos. These new video-first experiences are designed to help users engage with trusted health information more deeply (for example, through structured explanations, contextual prompts, or guided navigation inside long-form content) rather than relying on anonymous crowdsourced summaries.

For health brands and publishers, the signal is clear: getting featured in AI surfaces will increasingly depend on authority, quality signals, and structured video content, not clever social Q&A hacks. For users, it’s a move toward safer AI behavior in high-stakes domains.

Canva Magic Layers: Turning AI Images into Fully Editable Designs

Canva has introduced Magic Layers, a feature that takes flat images, including AI-generated visuals, and separates them into layered, fully editable designs. Instead of treating an AI-rendered image as a single, locked bitmap, Magic Layers identifies individual design components such as objects, text elements, and graphic elements, and reassembles them into editable layers while preserving the original layout.

Launching in public beta in the U.S., U.K., Canada, and Australia, Magic Layers allows designers to click into an AI image and move, edit, or delete specific components without rebuilding the layout from scratch. That means teams can:

- Remove or swap objects while keeping the composition intact.
- Adjust fonts, colors, and copy on pseudo-“flattened” graphics.
- Use AI as a starting point rather than a finished product.

Practically, this shifts Canva’s AI from “generate a final image” toward “generate a flexible design base” that human designers can finish, making AI art more usable inside real brand workflows that require precise control.
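To make the "flexible design base" idea concrete, here is a minimal sketch of how a layered design could be represented and edited. It is an illustration only, not Canva's data model or API: the `Layer` class, its fields, the helper functions, and the sample file names are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Layer:
    """One editable component of a design (hypothetical structure)."""
    name: str
    kind: str      # "object", "text", or "graphic"
    x: int         # position and size stay fixed so the layout is preserved
    y: int
    width: int
    height: int
    content: str   # an asset reference or the text itself


def swap_content(layers: List[Layer], name: str, new_content: str) -> List[Layer]:
    """Return a new stack with one layer's content replaced; geometry is untouched."""
    return [replace(layer, content=new_content) if layer.name == name else layer
            for layer in layers]


def remove_layer(layers: List[Layer], name: str) -> List[Layer]:
    """Return a new stack without the named layer; other layers keep their positions."""
    return [layer for layer in layers if layer.name != name]


# A flat image, imagined as already separated into three layers (made-up values).
design = [
    Layer("background", "graphic", 0, 0, 1080, 1080, "gradient.png"),
    Layer("product", "object", 340, 300, 400, 400, "sneaker.png"),
    Layer("headline", "text", 90, 80, 900, 120, "Summer Sale"),
]

edited = swap_content(design, "headline", "Winter Sale")
edited = remove_layer(edited, "product")
```

Because each edit returns a new list and leaves the other layers' coordinates alone, the composition survives the change, which is the property the feature description emphasizes.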

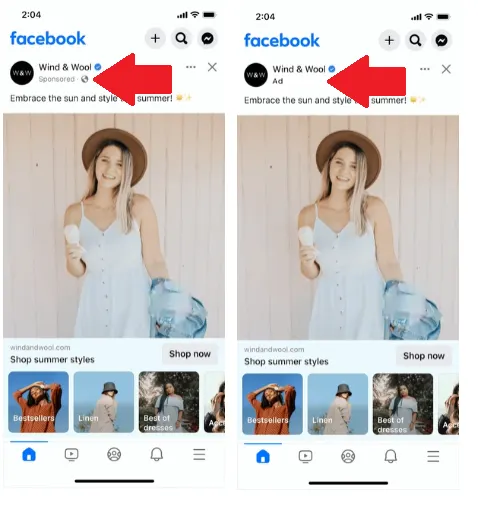

Meta Switches In-Stream Ad Labels from “Sponsored” to “Ad”

Meta is in the process of updating its in-stream ad transparency labels, replacing the long-used “Sponsored” tag with a simpler “Ad” label across its apps. Many users have already seen this on Instagram, where the conspicuous “Sponsored” label under posts is becoming a smaller, clearer “Ad” badge.

The change is part of a broader visual refresh of Meta’s app presentation and likely has several motivations:

- Clarity for users: “Ad” is shorter and more universally understood than “Sponsored,” especially for non-native English speakers.
- Consistency across formats: As new ad formats roll out in Reels, Stories, and other surfaces, a unified “Ad” tag standardizes how paid content is disclosed.
- Subtle design alignment: The smaller label fits into Meta’s evolving aesthetic, while still meeting transparency requirements.

For advertisers, performance impact will need to be monitored, because label wording and placement can influence how quickly users spot paid content, which can affect click and engagement behavior. But from a compliance and UX perspective, Meta appears to be aiming for clearer, more consistent disclosure without overly interrupting the visual flow of the feed.
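One simple way to monitor such an effect is to compare click-through rates before and after the label change with a two-proportion z-test. The sketch below uses only the Python standard library. The function name and the click and impression counts are made up for illustration, and a before/after comparison is not a randomized experiment, so seasonality or creative changes could explain any difference it flags.

```python
import math
from typing import Tuple


def two_proportion_z(clicks_a: int, n_a: int, clicks_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in CTR between periods A and B."""
    p_a = clicks_a / n_a
    p_b = clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("CTR is 0% or 100% in both periods; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: period A is the old label, period B is the new one.
z, p = two_proportion_z(clicks_a=1200, n_a=100_000, clicks_b=1350, n_b=100_000)
significant = p < 0.05
```

A small p-value here only says the two periods differ more than chance alone would suggest; attributing the change to the label itself would need a controlled test.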

Nano Banana Pro Comes to Google Ads

The much-discussed AI visual tool Nano Banana, which went viral earlier in the year, now has its Pro version integrated directly into Google Ads. Advertisers can access Nano Banana Pro at no additional cost within the platform and use it to generate:

- Instant on-brand visuals that align with brand colors and style cues.
- Photo-realistic renderings of products and scenarios.
- Multi-product images from a few simple text prompts.

This integration pushes Google Ads further into the creative generation space, giving media buyers and small advertisers access to high-quality campaign visuals without needing a separate design team or external tool. It also tightens the loop between text-based campaign input (offers, audiences, positioning) and visual outputs, which can now be produced, tested, and iterated inside the same environment where campaigns are configured.

For creative teams, this means more experiments and variants with less manual overhead. For the industry, it’s one more step toward AI-native ad platforms where targeting, bidding, and creative come under a single, AI-augmented roof.

Nvidia NemoClaw: Enterprise-Grade OpenClaw With Guardrails

At its GTC 2026 conference, Nvidia announced NemoClaw, described as an enterprise-grade distribution of OpenClaw, the fastest-growing open-source AI agent framework to date. NemoClaw bundles:

- OpenClaw’s agent runtime, which orchestrates autonomous AI agents and tools.
- Nvidia’s Nemotron models, optimized for agentic workflows.
- A new open-source security and policy layer called OpenShield, which enforces privacy, network access, and security controls on agents working with corporate data.

NemoClaw can be installed with a single command and runs across environments, from cloud deployments to local RTX PCs and Nvidia’s DGX Spark/Station hardware, making it accessible for both experimentation and production. Nvidia CEO Jensen Huang has reportedly framed OpenClaw as on track to become as foundational as Linux or Kubernetes for agentic systems, with NemoClaw as the enterprise-ready distribution with guardrails.

For enterprises, NemoClaw promises a way to adopt autonomous agents without sacrificing governance: IT teams can define policies around what data agents can access, which tools they can call, and how they interact with internal systems, all while tapping into Nvidia’s hardware and model ecosystem.
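The kind of policy described here (which data an agent may touch, which tools it may call, which systems it may reach) can be pictured as a default-deny allowlist check. The sketch below is a generic illustration of that pattern, not OpenShield's actual API or configuration format: `AgentPolicy`, `AgentAction`, `check_action`, and every tool, scope, and host name are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical allowlists; anything not listed is denied."""
    allowed_tools: FrozenSet[str] = frozenset()
    allowed_data_scopes: FrozenSet[str] = frozenset()
    allowed_hosts: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class AgentAction:
    """A single step an agent wants to take (hypothetical shape)."""
    tool: str
    data_scope: str
    host: Optional[str] = None  # None means the action makes no network call


def check_action(policy: AgentPolicy, action: AgentAction) -> List[str]:
    """Return a list of policy violations; an empty list means the action is allowed."""
    violations = []
    if action.tool not in policy.allowed_tools:
        violations.append(f"tool '{action.tool}' is not allowed")
    if action.data_scope not in policy.allowed_data_scopes:
        violations.append(f"data scope '{action.data_scope}' is not allowed")
    if action.host is not None and action.host not in policy.allowed_hosts:
        violations.append(f"host '{action.host}' is not allowed")
    return violations


policy = AgentPolicy(
    allowed_tools=frozenset({"search_docs", "summarize"}),
    allowed_data_scopes=frozenset({"public_kb"}),
    allowed_hosts=frozenset({"intranet.example.com"}),
)

allowed = check_action(policy, AgentAction("search_docs", "public_kb", "intranet.example.com"))
blocked = check_action(policy, AgentAction("send_email", "hr_records"))
```

Returning the full list of violations, rather than a single boolean, gives IT teams something to log and audit when an agent is stopped.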

LinkedIn Updates Its Feed Algorithm With More Advanced AI

LinkedIn has revised the architecture of its feed algorithm, moving to more advanced AI systems to decide which posts users see and how content is ranked in-stream. While the company has not publicly detailed every change, the direction is clear. The ranking system now leans on deeper AI modeling of:

- What content is most relevant given a member’s role, skills, and interests.
- Which engagement patterns (comments, long reads, shares) predict meaningful value.
- How to surface posts that encourage professional conversation rather than generic virality.

For creators and brands on LinkedIn, this likely means continued emphasis on high-signal interactions (substantive comments, saves, and shares) over low-effort reactions. It also reinforces the trend that feed success depends on aligning with LinkedIn’s evolving quality signals, not just posting frequently. Thoughtful, context-rich posts tailored to niche audiences are likely to benefit most from the upgraded ranking system.
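As a rough intuition for why high-signal interactions matter more than raw reaction counts, consider a toy weighted score. This is not LinkedIn's ranking model, which has not been published in detail; the weights, the `Post` fields, and the sample numbers are assumptions chosen only to illustrate the idea.

```python
from dataclasses import dataclass

# Hypothetical weights: deeper interactions count for more than quick reactions.
WEIGHTS = {"comments": 4.0, "saves": 3.0, "shares": 3.0, "reactions": 0.5}


@dataclass
class Post:
    title: str
    comments: int
    saves: int
    shares: int
    reactions: int
    impressions: int


def engagement_score(post: Post) -> float:
    """Weighted interactions per impression; 0.0 for posts nobody has seen."""
    if post.impressions == 0:
        return 0.0
    weighted = sum(weight * getattr(post, signal) for signal, weight in WEIGHTS.items())
    return weighted / post.impressions


# Made-up posts with the same reach but different interaction mixes.
posts = [
    Post("Quick poll", comments=2, saves=0, shares=1, reactions=300, impressions=10_000),
    Post("Deep-dive case study", comments=40, saves=25, shares=15, reactions=60, impressions=10_000),
]

ranked = sorted(posts, key=engagement_score, reverse=True)
```

Under these assumed weights the case study outranks the poll even though the poll collected five times as many reactions, which mirrors the shift toward conversation-driving content described above.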

The Common Thread: More Editable, More Governed, More Explicit AI

Across these updates (Google’s health decisions, Canva’s Magic Layers, Meta’s ad labels, Nano Banana Pro in Google Ads, Nvidia’s NemoClaw, and LinkedIn’s feed refresh), a few clear themes emerge:

- AI outputs are becoming more editable and controllable: Canva Magic Layers and Nano Banana Pro both treat AI content as a starting point, not an unchangeable end product.
- Guardrails and governance are being built in: Google is removing risky health summarization features, YouTube and others are investing in safety, and Nvidia’s NemoClaw wraps OpenClaw with OpenShield to make agents enterprise-safe.
- Transparency and ranking logic are shifting: Meta’s new ad labels, Google’s health moves, and LinkedIn’s feed architecture all show platforms trying to balance clarity, trust, and engagement in an AI-heavy environment.

For marketers, product teams, and developers, the message is consistent: AI isn’t just more powerful; it’s also more embedded, editable, and governed. Winning in this environment means understanding both the creative possibilities and the constraints.