Meta WhatsApp Incognito AI Chats: What the Privacy Update Means for Businesses Using AI

Meta’s decision to add incognito AI chats to WhatsApp is not just another feature update. It is a signal that privacy is becoming one of the most important battlegrounds in consumer AI adoption.

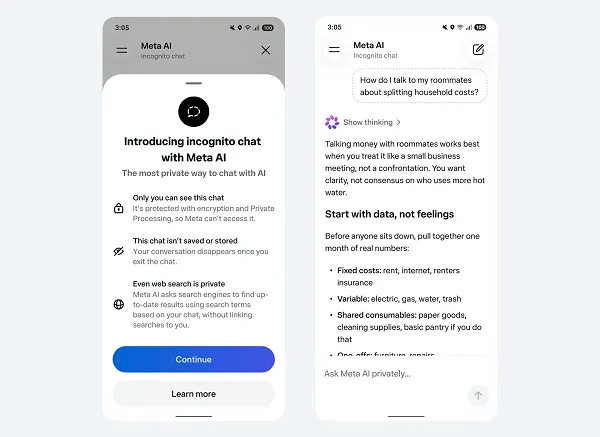

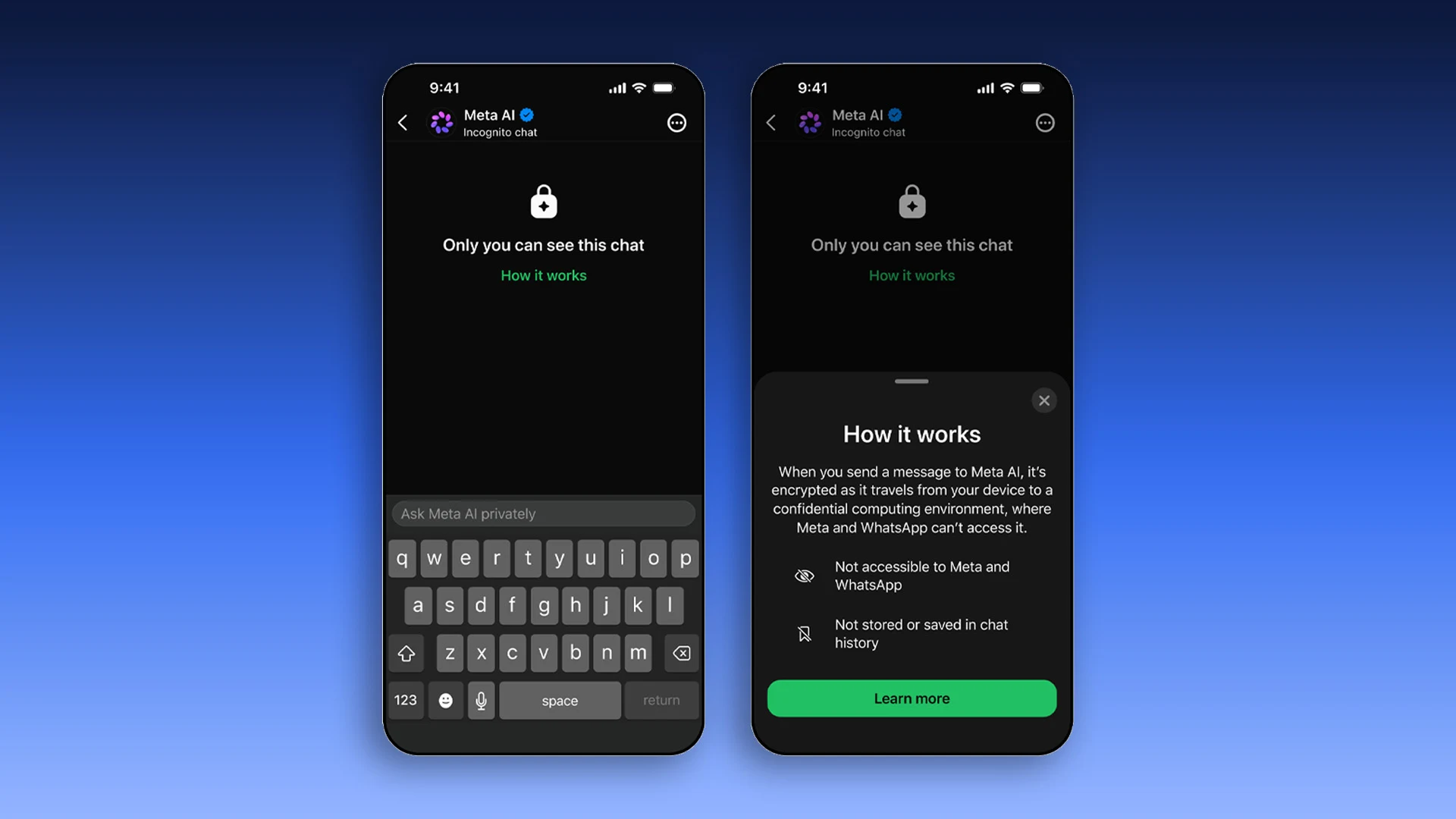

According to Social Media Today, Meta is introducing an Incognito Chat mode for Meta AI inside WhatsApp, allowing users to have private, temporary AI conversations that are processed in a secure environment and are not saved by default. The update is designed to make people more comfortable asking sensitive questions through AI, especially when those questions involve personal, financial, health, or work-related information.

For everyday users, this may feel like a simple privacy upgrade. For businesses, especially those that use WhatsApp to communicate with customers, it points to a much bigger shift: people are becoming more selective about where they share sensitive information, how AI systems process that information, and whether brands can be trusted to handle AI-assisted conversations responsibly.

If your business depends on WhatsApp for sales, support, bookings, consultations, or customer follow-up, this update should not be ignored. It changes the context in which customers will think about AI, privacy, and digital communication.

What Meta’s WhatsApp Incognito AI Chats Actually Do

Meta’s new incognito AI chat mode is designed to create a more private space for users interacting with Meta AI inside WhatsApp. In simple terms, it gives users a way to ask AI questions without those conversations being saved or made accessible in the same way standard AI interactions often are.

The key promise is privacy. Meta says that when a user starts an Incognito Chat with Meta AI, the conversation is private and temporary. Messages are processed in a secure environment, are not saved, and disappear by default. This aligns with WhatsApp’s long-standing brand positioning around private messaging, which has helped distinguish it from more public-facing social platforms like Facebook and Instagram.

That distinction matters because WhatsApp is not just another app in Meta’s ecosystem. For many users, especially in markets across Africa, Latin America, India, and immigrant communities worldwide, WhatsApp is a primary communication layer. People use it to talk to family, negotiate business, send documents, confirm payments, book services, and discuss private matters. If AI is going to live inside that environment, users need to believe it will not compromise the intimacy and trust that made WhatsApp useful in the first place.

This is why the incognito framing is strategically important. Meta is not only adding AI functionality. It is trying to reduce the psychological barrier that prevents users from asking AI more personal questions.

Why Meta Is Making AI Chats More Private

The timing of this update is important. AI chatbots are becoming more embedded in everyday digital behavior, but many users still hesitate to share sensitive details with them. That hesitation is rational. People have become increasingly aware that AI conversations may be stored, reviewed, used to improve systems, or connected to broader personalization models depending on the platform and settings.

Meta’s challenge is especially complex because it operates some of the world’s largest social and messaging platforms. The company wants AI to become a natural part of daily interaction across WhatsApp, Instagram, Facebook, Messenger, Threads, smart glasses, and its standalone Meta AI app. Social Media Today recently reported that Meta is also expanding AI access across Threads and other apps, showing that AI is becoming a central layer of the company’s product strategy rather than a side experiment.

But the more Meta integrates AI into personal communication, the more it must answer a difficult trust question: will people feel safe using AI inside spaces where they already share private information?

Incognito AI chats are Meta’s attempt to answer that question inside WhatsApp. The company appears to understand that AI adoption will not be driven by capability alone. It will also be driven by perceived safety. A chatbot can be powerful, fast, and convenient, but if users fear that their sensitive questions may be stored, analyzed, or exposed, they will limit what they ask.

For businesses, this is the first major lesson from the update: AI adoption depends on trust architecture, not just technical functionality.

The Bigger Shift: AI Privacy Is Becoming a Customer Expectation

The most important implication for businesses is that privacy is moving from a legal checkbox to a customer expectation.

In the past, many small businesses treated privacy as something buried in a policy page. Customers rarely asked detailed questions about how their data was handled unless something went wrong. AI is changing that. When customers know they are interacting with automated systems, they become more conscious of what they are sharing, who can see it, and whether the conversation could be used beyond the immediate interaction.

This is especially relevant if your business uses WhatsApp for high-trust conversations. A tax consultant, immigration advisor, therapist, legal assistant, real estate agent, coach, insurance broker, or healthcare-adjacent service provider may receive deeply personal messages from clients. Even if you are not using advanced AI yet, your customers may soon assume that businesses are experimenting with automation behind the scenes.

That assumption creates a trust gap. If customers believe AI might be involved but you do not explain how, they may become more cautious. They may share less information, avoid asking sensitive questions, or move the conversation to a competitor that communicates privacy more clearly.

Meta’s incognito AI chat update reinforces the idea that people want more control over AI conversations. Your business does not need to copy Meta’s exact feature, but you do need to understand the expectation it creates.

Why This Update Matters for Businesses Using WhatsApp

If your business uses WhatsApp as a sales or support channel, this update matters because it changes how customers may compare private AI experiences with business messaging experiences.

A customer who can open an incognito AI chat inside WhatsApp may begin to expect similar privacy clarity when messaging a business. They may wonder whether your chatbot stores their information, whether your team can see every message, whether conversations are used for training, or whether sensitive details are shared with third-party tools.

This is not only a compliance issue. It is a conversion issue.

Customers are more likely to complete a booking, request a quote, share documents, or explain their problem when they feel safe. If your WhatsApp experience feels unclear, overly automated, or careless with sensitive information, you may lose leads before they ever reach a decision stage.

For service businesses, trust is often the real sales funnel. A customer may first message you because they need help, but they choose to continue because the interaction feels safe, professional, and human. AI can support that process, but only if it is implemented with restraint.

The Privacy-Accuracy Tradeoff Businesses Need to Understand

One of the most important concerns raised by Social Media Today is that private AI processing may create challenges around accuracy and safety oversight. If conversations are not saved or reviewed, it may be harder to evaluate whether the AI is giving accurate, safe, or appropriate responses.

This is a crucial point for businesses. Privacy is valuable, but it does not automatically make AI trustworthy. A private chatbot can still give poor advice. A secure AI conversation can still misunderstand a customer’s problem. A disappearing chat can still produce a response that creates confusion, legal risk, or reputational damage.

For your business, the lesson is clear: do not treat privacy as a substitute for quality control.

If you use AI in customer communication, you need both privacy safeguards and response governance. That means deciding which questions AI can answer, which topics require human review, and which categories should never be handled by automation without professional oversight.

For example, a WhatsApp chatbot for a travel agency may safely answer questions about package availability, office hours, required documents, and appointment scheduling. But if a customer asks for immigration advice, visa eligibility interpretation, or financial guarantees, the chatbot should route the conversation to a qualified human. The risk is not simply that the AI may be wrong. The risk is that the customer may act on the answer because it came from your business.
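One way to make these rules concrete is to write them down as a policy table that the chatbot checks before replying. The table has the same three categories described above:

- questions AI can answer
- topics that need human review
- topics that should never be automated

The sketch below is a minimal Python illustration. It assumes an upstream step has already labeled each incoming message with a topic. The topic names, the `Handling` tiers and the `handling_for` helper are hypothetical. They are not part of any WhatsApp or Meta API.

```python
from enum import Enum


class Handling(Enum):
    AI_CAN_ANSWER = "ai"      # routine, low-risk questions the bot may answer
    HUMAN_REVIEW = "review"   # the bot may draft, but a person approves before sending
    HUMAN_ONLY = "human"      # never handled by automation


# Hypothetical topic labels for a travel agency; replace with your own.
POLICY: dict[str, Handling] = {
    "package_availability": Handling.AI_CAN_ANSWER,
    "office_hours": Handling.AI_CAN_ANSWER,
    "required_documents": Handling.AI_CAN_ANSWER,
    "appointment_scheduling": Handling.AI_CAN_ANSWER,
    "refund_request": Handling.HUMAN_REVIEW,
    "immigration_advice": Handling.HUMAN_ONLY,
    "visa_eligibility": Handling.HUMAN_ONLY,
    "financial_guarantee": Handling.HUMAN_ONLY,
}


def handling_for(topic: str) -> Handling:
    """Return how a message on this topic should be handled."""
    # Unknown topics fall into the safest tier instead of defaulting to automation.
    return POLICY.get(topic, Handling.HUMAN_ONLY)


if __name__ == "__main__":
    for topic in ("office_hours", "visa_eligibility", "lost_passport"):
        print(topic, "->", handling_for(topic).name)
```

The key design choice is the default. A topic the policy has never seen goes to the human-only tier. A gap in your rules therefore fails safe instead of letting the chatbot improvise.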

What Small Businesses Should Learn From Meta’s Move

Meta’s update offers a strategic lesson for smaller brands: the future of AI communication will reward businesses that make customers feel in control.

You do not need Meta’s infrastructure to apply this principle. You need clear communication, thoughtful boundaries, and a customer-first approach to automation.

If your business uses WhatsApp, start by asking a simple question: when a customer messages you, do they know whether they are speaking to a person, an AI assistant, or a mix of both?

If the answer is no, you have a trust problem waiting to happen.

Transparency does not weaken your brand. It strengthens it. Customers do not necessarily reject automation. Many appreciate faster responses, instant confirmations, and 24/7 availability. What they dislike is feeling misled. If your chatbot pretends to be human, collects sensitive information without explanation, or gives confident answers outside its competence, you create unnecessary risk.

A better approach is to frame AI as a support layer. You can tell customers that your assistant helps with basic questions, scheduling, and routing, while sensitive or complex matters are reviewed by your team. That kind of clarity reduces anxiety and makes automation feel useful rather than intrusive.

How to Adjust Your WhatsApp AI Strategy

If your business currently uses or plans to use AI inside WhatsApp workflows, you should review your strategy through four practical lenses: disclosure, data handling, escalation, and customer consent.

1. Disclose When AI Is Being Used

Customers should know when they are interacting with an automated assistant. This does not require a long legal explanation. A short, clear message at the beginning of the conversation is often enough.

For example, a business could say: “You’re chatting with our automated assistant. It can help with common questions and bookings. For sensitive or complex issues, our team will step in.”

This kind of disclosure sets expectations. It tells the customer what the AI can do and reassures them that human support is available when needed.
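If your chatbot platform does not show a disclosure on its own, one simple approach is to attach it to the first automated reply in each conversation. This Python sketch reuses the example wording above. It assumes your system gives each WhatsApp conversation a stable identifier. The `DisclosureTracker` class is hypothetical and keeps its state in memory, so a real deployment would need to persist it.

```python
DISCLOSURE = (
    "You're chatting with our automated assistant. It can help with common "
    "questions and bookings. For sensitive or complex issues, our team will step in."
)


class DisclosureTracker:
    """Attach the AI disclosure to the first automated reply in each conversation."""

    def __init__(self) -> None:
        # In-memory only; a real system would persist this per conversation.
        self._disclosed: set[str] = set()

    def prepare_reply(self, conversation_id: str, reply: str) -> str:
        if conversation_id in self._disclosed:
            return reply
        self._disclosed.add(conversation_id)
        return f"{DISCLOSURE}\n\n{reply}"


if __name__ == "__main__":
    tracker = DisclosureTracker()
    print(tracker.prepare_reply("conv-1", "Our office opens at 9am."))
    print(tracker.prepare_reply("conv-1", "Would you like to book a slot?"))
```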

2. Avoid Collecting Sensitive Information Too Early

Many businesses make the mistake of asking for too much information too soon. In an AI-assisted WhatsApp flow, this can feel invasive.

Instead of asking for personal documents, financial details, health information, or confidential business information at the start of the conversation, use the chatbot to qualify the request at a basic level. Once the customer’s need is clear, move sensitive information collection into a more controlled process.

This protects both the customer and your business. It also makes the experience feel more professional.
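Even with careful prompts, some customers will send sensitive details anyway. Suppose you want to catch one common category, payment card numbers, before a message is logged or forwarded to other tools. A minimal approach is to look for digit runs of card length and confirm them with the Luhn checksum. The function names are hypothetical. This sketch covers only card numbers and will not detect health, identity or document data.

```python
import re

# Runs of 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_card_numbers(text: str) -> tuple[str, bool]:
    """Replace likely card numbers; return (redacted_text, found_any)."""
    found = False

    def _replace(match: re.Match) -> str:
        nonlocal found
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            found = True
            return "[REDACTED CARD NUMBER]"
        return match.group()  # card-length digits that fail Luhn are left alone

    return CARD_CANDIDATE.sub(_replace, text), found
```

Redaction only limits how far the number spreads inside your own systems. The customer has still sent it over WhatsApp. A flagged message is therefore a good moment to reply and ask them to wait until a team member requests such details.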

3. Create Clear Escalation Rules

AI should not handle every conversation. Your business needs clear rules for when a conversation should move to a human.

Escalation should happen when a customer asks about legal, medical, financial, immigration, contractual, crisis-related, or highly personal matters. It should also happen when the customer expresses frustration, confusion, urgency, or dissatisfaction.

A good AI workflow does not try to keep the customer trapped inside automation. It recognizes when human judgment is more valuable.
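These triggers can be written as simple rules. The Python sketch below is a deliberately crude illustration that assumes keyword matching is acceptable as a first pass. The keyword lists, the `max_failed` threshold and the function names are all hypothetical. A production system would likely use a proper classifier and have someone review what it misses.

```python
import re

# Hypothetical trigger phrases; keyword matching is a crude stand-in for a classifier.
SENSITIVE_TOPICS = {
    "legal": ["lawyer", "lawsuit", "court"],
    "medical": ["diagnosis", "medication", "symptoms"],
    "immigration": ["visa", "asylum", "deportation"],
    "crisis": ["emergency", "unsafe"],
}
EMOTIONAL_SIGNALS = {
    "frustration": ["ridiculous", "fed up", "useless"],
    "confusion": ["don't understand", "confused", "makes no sense"],
    "urgency": ["urgent", "asap", "right now"],
    "dissatisfaction": ["complaint", "disappointed", "unacceptable"],
}


def _mentions(text: str, phrase: str) -> bool:
    # Whole-word match so that "court" does not fire on "courtesy".
    return re.search(rf"\b{re.escape(phrase)}\b", text) is not None


def escalation_reasons(message: str) -> list[str]:
    """Return the labels of every trigger found in the message."""
    text = message.lower()
    reasons = []
    for rules in (SENSITIVE_TOPICS, EMOTIONAL_SIGNALS):
        for label, phrases in rules.items():
            if any(_mentions(text, p) for p in phrases):
                reasons.append(label)
    return reasons


def should_escalate(message: str, failed_bot_replies: int = 0, max_failed: int = 2) -> bool:
    """Escalate on any trigger, or after the bot has failed to help repeatedly."""
    return bool(escalation_reasons(message)) or failed_bot_replies >= max_failed
```

Counting failed bot replies covers a common case. The customer may never use a trigger word, yet the automation is clearly not helping.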

4. Review Your Third-Party Tools

Many businesses connect WhatsApp to CRM systems, chatbot platforms, automation tools, analytics dashboards, or customer support software. Each tool may have its own data practices.

Before promoting your WhatsApp channel as private or secure, understand what happens to customer messages after they are received. Are they stored? For how long? Who can access them? Are they used to train AI models? Can they be deleted on request?

These questions are not only for large enterprises. Small businesses also need answers, especially if they serve clients in regulated or sensitive industries.
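One way to track the answers is to record them per tool and treat every unanswered question as an open item. This Python sketch is only an illustration. The `ToolAudit` fields mirror the questions above, `None` stands for "we don't know yet", and the tool names in the example are made up.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ToolAudit:
    """Answers to the audit questions for one connected tool; None means unknown."""
    name: str
    stores_messages: Optional[bool] = None
    retention_days: Optional[int] = None
    who_can_access: Optional[str] = None
    trains_ai_models: Optional[bool] = None
    deletes_on_request: Optional[bool] = None


def open_questions(tool: ToolAudit) -> list[str]:
    """List the audit questions this tool's vendor has not yet answered."""
    skip = {"name"}
    if tool.stores_messages is False:
        skip.add("retention_days")  # retention does not apply if nothing is stored
    return [
        f.name for f in fields(tool)
        if f.name not in skip and getattr(tool, f.name) is None
    ]


def concerns(tool: ToolAudit) -> list[str]:
    """Flag answers that conflict with promoting the channel as private."""
    issues = []
    if tool.trains_ai_models:
        issues.append("messages may be used to train AI models")
    if tool.deletes_on_request is False:
        issues.append("messages cannot be deleted on request")
    return issues


if __name__ == "__main__":
    crm = ToolAudit("ExampleCRM", stores_messages=True, retention_days=365,
                    who_can_access="sales team", deletes_on_request=True)
    print(crm.name, "open:", open_questions(crm), "concerns:", concerns(crm))
```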

Why Trust Will Become a Competitive Advantage in AI Messaging

As AI becomes more common, speed alone will stop being impressive. Every business will eventually be able to respond faster, automate FAQs, summarize conversations, and generate replies. The real differentiator will be whether customers trust the system enough to use it.

Meta’s incognito AI chats show that even the largest technology companies understand this. If users do not trust AI with sensitive questions, they will limit usage. If they limit usage, AI becomes less central to daily behavior. Privacy, therefore, is not just a defensive feature. It is an adoption strategy.

The same logic applies to your business. If customers trust your WhatsApp experience, they are more likely to share context, ask serious questions, book consultations, and move forward. If they do not trust it, automation may actually reduce conversion by making the interaction feel impersonal or risky.

This is especially important for African-owned and immigrant-owned businesses that rely heavily on relationship-based selling. In many communities, WhatsApp is not just a communication tool. It is a trust channel. People message businesses because they want direct access, personal reassurance, and fast answers from someone they believe understands their context. If AI is introduced carelessly, it can weaken that relational advantage. If it is introduced thoughtfully, it can help you respond faster while preserving the human trust that makes your brand valuable.

The Risk of Over-Automating Private Conversations

One of the biggest mistakes businesses can make is assuming that because customers use WhatsApp casually, they will accept fully automated communication there.

WhatsApp feels personal. That is its strength. But it also means customers may react negatively when a business turns the channel into a cold, bot-driven funnel. The more private the platform feels, the more careful your automation should be.

Meta’s incognito AI update reinforces this emotional reality. People want AI help, but they want it in a controlled space. They want convenience without exposure. They want answers without feeling watched. They want personalization without surrendering too much personal data.

Businesses that ignore this tension may damage trust. Businesses that understand it can design better customer journeys.

For example, instead of using AI to push aggressive sales messages, a service business could use AI to organize incoming inquiries, answer basic questions, and prepare the human team with a summary before they respond. The customer still gets speed, but the relationship remains human where it matters.

What This Means for AI-Powered Customer Support

Customer support is one of the most obvious use cases for WhatsApp AI, but it also carries significant risk.

A support chatbot can reduce response times, answer repetitive questions, and help customers outside business hours. But support conversations often include frustration, urgency, and sensitive details. If the AI gives generic answers or fails to understand emotional context, the customer experience can deteriorate quickly.

The best approach is not full replacement. It is assisted support.

AI can handle first-line triage: identifying the issue, collecting non-sensitive details, checking order status, providing standard instructions, or routing the customer to the right department. Human agents should handle exceptions, complaints, nuanced decisions, and high-value customer interactions.

This hybrid model is more sustainable because it uses AI where it is strongest and human judgment where it is most needed.

What This Means for WhatsApp Marketing

For marketers, Meta’s incognito AI chats point to a broader privacy-sensitive future for messaging campaigns.

WhatsApp marketing already requires more restraint than public social media marketing. Users are less tolerant of spam in private messaging environments. If AI-generated campaigns become too frequent, too personalized, or too obviously automated, customers may opt out or block the business.

The opportunity is to use AI for relevance, not intrusion.

Instead of blasting generic promotions, businesses can use AI to segment inquiries, personalize follow-up based on stated interests, and help teams respond with more useful information. But personalization should be based on consent and context, not hidden data extraction.

A good WhatsApp marketing strategy should feel like helpful service, not surveillance.
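In code, consent and context can act as a filter in front of any AI-generated follow-up. The sketch below assumes a hypothetical contact record that stores two things: an explicit marketing opt-in and the interests the customer actually stated. It is only an illustration and does not replace WhatsApp's own business messaging policies.

```python
from dataclasses import dataclass, field


@dataclass
class Contact:
    """Hypothetical contact record; fields are illustrative, not a WhatsApp API type."""
    phone: str
    opted_in_to_marketing: bool = False
    stated_interests: set[str] = field(default_factory=set)


def follow_up_audience(contacts: list[Contact], campaign_topic: str) -> list[Contact]:
    """Keep only contacts who opted in AND told you they care about this topic."""
    return [
        c for c in contacts
        if c.opted_in_to_marketing and campaign_topic in c.stated_interests
    ]


if __name__ == "__main__":
    contacts = [
        Contact("+10000000001", True, {"visa_packages"}),
        Contact("+10000000002", False, {"visa_packages"}),    # interested, but no consent
        Contact("+10000000003", True, {"holiday_packages"}),  # consent, different topic
    ]
    for c in follow_up_audience(contacts, "visa_packages"):
        print("send follow-up to", c.phone)
```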

How Businesses Can Communicate AI Privacy Clearly

You do not need complex language to communicate your AI privacy approach. In fact, simple language is better.

Your WhatsApp introduction, website contact page, or customer onboarding message can explain three things: when AI is used, what information is collected, and when a human will take over.

For example:

“We use an automated assistant to help answer common questions and schedule appointments. Please avoid sending sensitive documents until a team member requests them. Complex or private matters are handled by our staff.”

This kind of message does several things at once. It normalizes automation, protects the customer, can help reduce your liability, and positions your business as responsible.

If your business serves clients in industries where confidentiality is central, you may need a more detailed privacy notice. But even then, the goal should be clarity, not legal complexity.

The Strategic Opportunity for Smaller Brands

Large platforms like Meta are building privacy features because they know trust will determine AI adoption. Smaller businesses can learn from that without needing enterprise-level technology.

Your advantage is proximity. You can communicate with customers more personally than a global platform can. You can explain your process, set expectations, and provide human reassurance. You can use AI to improve responsiveness without making customers feel like they are being processed by a machine.

That is where smaller brands can compete.

The businesses that win with AI will not be the ones that automate the most. They will be the ones that automate the right parts of the customer journey while protecting the trust that drives revenue.

Practical Checklist: What to Do Now

If your business uses WhatsApp and is considering AI, use this checklist to evaluate your readiness:

Review Your Current WhatsApp Workflow

Map what happens from the moment a customer sends the first message. Identify who sees the message, where it is stored, what tools process it, and how long it remains accessible.

Identify Sensitive Conversation Categories

List the types of customer questions that should not be handled entirely by AI. This may include pricing negotiations, complaints, legal questions, financial details, medical information, immigration matters, or confidential business issues.

Add a Clear AI Disclosure

Tell customers when automation is involved. Keep the language short, practical, and visible at the beginning of the interaction.

Create Human Handoff Rules

Decide when AI should stop responding and a person should take over. Make this part of your operating process, not an occasional judgment call.
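Making handoff part of your process usually means the chatbot needs to know who owns each conversation. A minimal Python sketch is a small state machine. It assumes your rules already produce a yes/no decision for each incoming message. Once a person has taken over, the bot stays silent until that person explicitly hands the conversation back. The class, the method names and the returned action strings are hypothetical.

```python
from enum import Enum, auto
from typing import Optional


class Owner(Enum):
    BOT = auto()
    HUMAN = auto()


class Conversation:
    """Tracks whether the bot or a person currently owns a WhatsApp conversation."""

    def __init__(self) -> None:
        self.owner = Owner.BOT
        self.handoff_reason: Optional[str] = None

    def hand_off(self, reason: str) -> None:
        self.owner = Owner.HUMAN
        self.handoff_reason = reason

    def release_to_bot(self) -> None:
        # Only a team member should call this, once the sensitive part is resolved.
        self.owner = Owner.BOT
        self.handoff_reason = None


def route(conversation: Conversation, needs_human: bool) -> str:
    """Decide what happens to one incoming message (hypothetical action names)."""
    if conversation.owner is Owner.HUMAN:
        return "queue_for_agent"  # the bot stays silent while a person owns the chat
    if needs_human:
        conversation.hand_off("handoff rule matched")
        return "notify_agent"
    return "bot_reply"
```

The important property is that the bot cannot drift back into a conversation on its own. Only `release_to_bot` returns control, which keeps the handoff a deliberate decision rather than an occasional judgment call.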

Train Your Team

Your staff should understand how AI is being used, what it can and cannot answer, and how to correct or intervene when necessary.

Audit Your Tools

Check the privacy and data retention policies of any chatbot, CRM, automation, or analytics platform connected to WhatsApp.

Avoid Overpromising Privacy

Do not claim that conversations are fully private, encrypted, temporary, or not stored unless you can verify that across every tool in your workflow.
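Before publishing a claim such as "messages are not stored," you can check it against what each tool in your workflow actually does. This Python sketch assumes you have already gathered those facts per tool. The following are hypothetical choices made for illustration:

- the `ToolFacts` fields
- the 30-day threshold used here to define "temporary"
- the tool names

Claims about encryption or full privacy need a separate review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolFacts:
    """Verified facts about one tool in the WhatsApp workflow."""
    name: str
    stores_messages: bool
    retention_days: Optional[int] = None  # None means indefinite or not documented


def unsupported_claims(tools: list[ToolFacts],
                       max_retention_days: int = 30) -> dict[str, list[str]]:
    """Map each privacy claim to the tools that contradict it."""
    problems: dict[str, list[str]] = {}
    storing = [t for t in tools if t.stores_messages]
    if storing:
        problems["not stored"] = [t.name for t in storing]
    # "Temporary" fails if any storing tool keeps messages too long or indefinitely.
    long_lived = [
        t.name for t in storing
        if t.retention_days is None or t.retention_days > max_retention_days
    ]
    if long_lived:
        problems["temporary"] = long_lived
    return problems
```

An empty result means no tool in the inventory contradicts either claim. It does not prove the inventory itself is complete.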

The Bottom Line: Privacy Is Now Part of the AI Product Experience

Meta’s WhatsApp incognito AI chats show where the market is heading. AI tools are becoming more powerful, but users are also becoming more cautious. They want the benefits of AI without losing control over their private information.

For businesses, this creates both pressure and opportunity. The pressure is that you can no longer treat AI automation as a behind-the-scenes operational choice. Customers will increasingly care about how it works. The opportunity is that responsible AI communication can become a trust signal.

If your business uses WhatsApp, the smartest move is not to rush into full automation. It is to design a messaging experience that is fast, clear, privacy-aware, and human where it matters most.

AI can help you respond better. But trust is what makes customers continue the conversation.